Key ways modern data platforms will reshape asset management

This article was also published as: “Architecting Alpha: How modern data platforms will reshape asset management”

The asset management industry stands at a critical inflection point

Private assets are exploding. ESG demands are intensifying. AI is transforming every domain. Data costs are spiralling out of control, and through it all, firms must still deliver alpha while navigating a labyrinth of regulatory requirements and client expectations.

The infrastructure that worked for traditional equity and fixed income portfolios is buckling under the weight of this new reality – especially for firms juggling outsourced ABOR and IBOR systems across hybrid operating models.

The challenge: bridging a fragmented data ecosystem

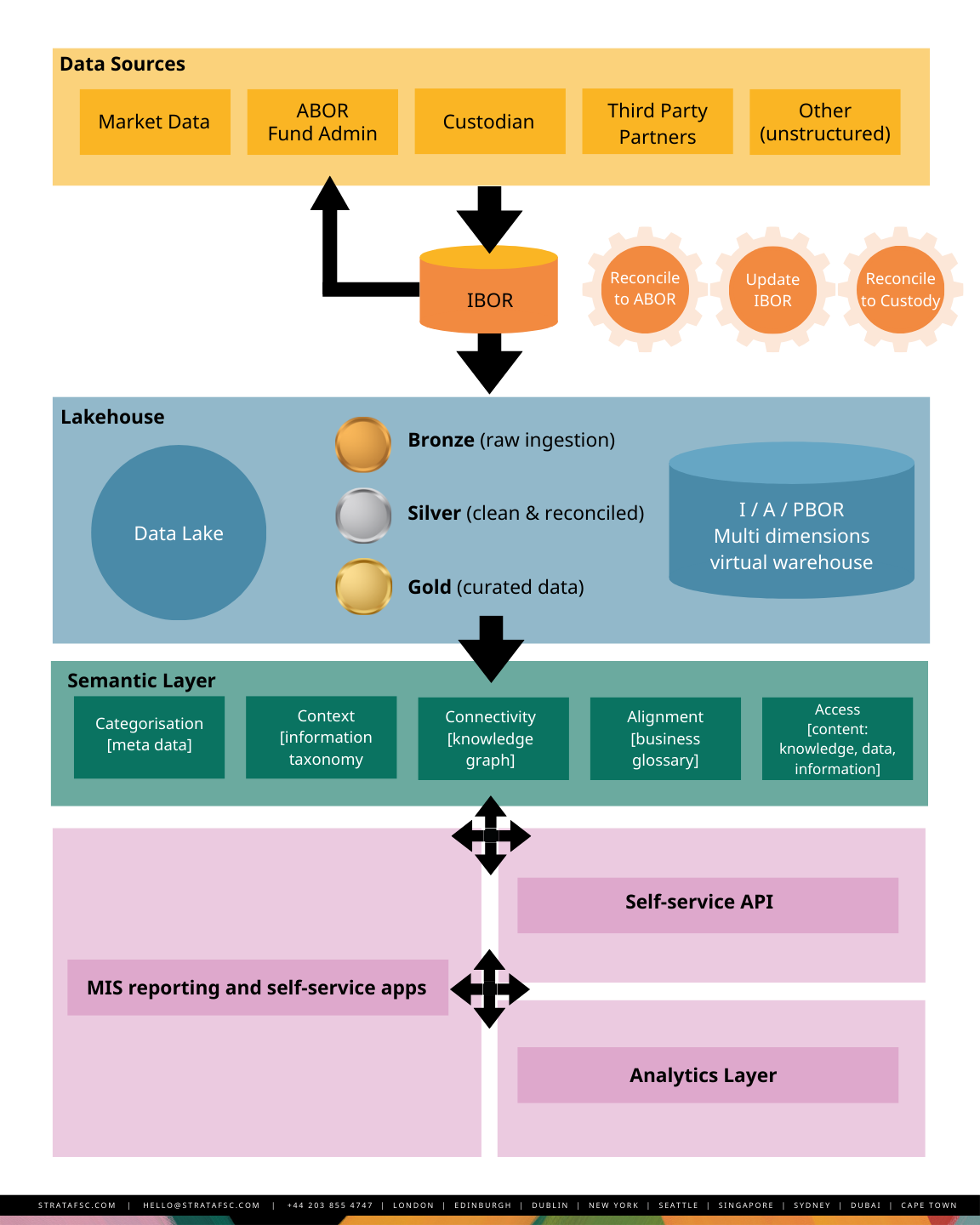

Today’s architecture must seamlessly bridge: a consistent book of record spanning internal trading systems (OMS) and external execution platforms (EMS), disparate and unstructured datasets, unreliable external service providers, data-hungry scientists, multiple asset identifiers and inconsistent entity identifiers, cloud infrastructure, and mounting security threats – all while delivering the real-time responsiveness that front-office decision making demands.

The modern data architecture isn’t simply a technology upgrade; it is a strategic asset that determines whether firms can survive and compete in the new data landscape. The business case for architectural transformation is compelling.

Core architectural principles

The data lake: your foundation

The modern architecture excelling across FinTech represents a fundamental break from traditional data warehouses. It’s cloud-native. It’s built on a Data Lakehouse and it’s governed by Data Mesh principles.

At the centre sits a well-organized, cloud-native data lake that ingests everything: structured data, semi-structured data, unstructured data – all at scale. Unlike relational databases optimised for transaction processing, this lake handles FTP files, API data (JSON, XML, CSV), PDF contracts, due diligence images, NLP-processed call transcripts, and real-time market feeds simultaneously.

The Lakehouse employs a Medallion Architecture – progressively refining data quality from raw to production-ready. This creates a single source of truth while optimising data for analytics, machine learning, and operational use.
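The progressive refinement can be illustrated with a minimal sketch. The layer names (bronze, silver, gold) follow common Medallion usage; the record fields, the sample payload, and the functions `to_silver` and `to_gold` are hypothetical and stand in for whatever a firm's pipeline actually does.

```python
from datetime import date

# Bronze: raw records kept exactly as received (hypothetical vendor payload).
bronze = [
    {"isin": "US0378331005", "qty": "100", "px": "189.50", "as_of": "2024-03-01"},
    {"isin": "US0378331005", "qty": "100", "px": "189.50", "as_of": "2024-03-01"},  # duplicate
    {"isin": "", "qty": "50", "px": "n/a", "as_of": "2024-03-01"},  # unusable row
]

def to_silver(rows):
    """Silver: typed, validated and de-duplicated records."""
    seen, clean = set(), []
    for r in rows:
        try:
            rec = (r["isin"], float(r["qty"]), float(r["px"]),
                   date.fromisoformat(r["as_of"]))
        except (KeyError, ValueError):
            continue  # a fuller pipeline would quarantine rejects for review
        if not rec[0] or rec in seen:
            continue
        seen.add(rec)
        clean.append(rec)
    return clean

def to_gold(silver_rows):
    """Gold: market value per instrument and date, ready for consumers."""
    out = {}
    for isin, qty, px, as_of in silver_rows:
        out[(isin, as_of)] = out.get((isin, as_of), 0.0) + qty * px
    return out

silver = to_silver(bronze)
gold = to_gold(silver)
```

The bronze list is never modified, so the raw delivery remains available for audit or reprocessing while downstream users read only the refined layers.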

Key components

- Unified platform: The Lakehouse combines the flexibility of a Data Lake (raw, unstructured data) with the governance and structure of a Data Warehouse.

- IBOR as central hub: Within the Lakehouse, firms build a real-time investment book of record (IBOR). This hub consolidates trades, cash, and holdings from systems like CRD and Aladdin, giving the entire firm a unified, intraday portfolio view. No more delayed or inconsistent data.

For firms with outsourced ABOR, the data lake becomes critical. ABOR providers deliver end-of-day NAVs, reconciled positions, and compliance reports – but that latency creates dangerous blind spots for intraday risk and investment decisions.

The solution?

Bidirectional synchronization: pull ABOR data for reconciliation while feeding your outsourced provider complete transaction feeds, corporate actions, and cash movements from your onsite IBOR.

Data mesh: decentralised ownership, accelerated agility

Data Mesh solves the organisational bottleneck by flipping the ownership model:

- Data as a product: Ownership moves to business domains. Your data science team owns and governs their “alternative data feed” as a secure, standardised product.

- Self-service via APIs: Data flows through standardised APIs. Portfolio managers and data scientists access validated data products directly – no waiting for IT to build custom pipelines. Quants can rapidly ingest, profile, and test new datasets in a compliant environment, accelerating alpha discovery.

- Unified governance: A central governance layer enforces security, privacy, and quality standards across all decentralised products. You gain agility without introducing regulatory or operational risk.
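One way to picture the split between domain ownership and central governance is a catalogue that accepts data products only if they meet firm-wide policy. This is a sketch under stated assumptions: `DataProduct`, `Catalogue`, the classification labels, and the quality SLA field are all hypothetical names, not part of any specific Data Mesh product.

```python
from dataclasses import dataclass

# Hypothetical central policy: the only classifications the firm allows.
ALLOWED_CLASSIFICATIONS = {"public", "internal", "restricted"}

@dataclass(frozen=True)
class DataProduct:
    name: str
    owner_domain: str        # the business domain accountable for the product
    schema: dict             # column name -> type name
    classification: str
    quality_sla_pct: float   # minimum share of rows passing quality checks

class Catalogue:
    """Central governance layer: domains publish, the catalogue enforces policy."""

    def __init__(self):
        self._products = {}

    def register(self, product):
        problems = []
        if product.classification not in ALLOWED_CLASSIFICATIONS:
            problems.append("unknown classification")
        if not product.schema:
            problems.append("schema is empty")
        if not 0 < product.quality_sla_pct <= 100:
            problems.append("quality SLA must be in (0, 100]")
        if problems:
            raise ValueError("; ".join(problems))
        self._products[product.name] = product

    def find(self, name):
        return self._products[name]

catalogue = Catalogue()
catalogue.register(DataProduct(
    name="alternative_data_feed",
    owner_domain="data_science",
    schema={"isin": "str", "sentiment": "float"},
    classification="restricted",
    quality_sla_pct=99.0,
))
```

The domain team decides what the product contains; the catalogue decides only whether it meets the shared rules, which is the balance the bullets above describe.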

The IBOR: your operational source of truth

The modern IBOR operates independently of back-office schedules, bypassing the ABOR bottleneck for intraday front-office needs.

The ABOR bottleneck: ABOR requires finalised, audited figures for official NAV. This dependency on complex accounting data creates intolerable delays. Liquid asset managers can’t confidently trade without knowing the accurate status of illiquid positions.

The IBOR serves portfolio managers, traders, and risk officers who need real-time positions, P&L, and exposure metrics.

Modern solutions handle complex valuations with bespoke engines, support multiple share classes and fund structures, and provide stock-level performance and attribution across asset classes.

Modern IBOR Capabilities:

- Master data management: centralised, consistent reference data.

- Front office valuations: Integrates proprietary models to generate daily NAVs and proxy valuations for illiquid and curve-driven fixed income assets. Essential for accurate positions, trade analysis, cross-asset VaR, sensitivity analysis, and compliance monitoring.

- Liquidity Forecasting: Calculates an independent cash ladder, projecting future cash flows (capital calls and distributions). Liquid asset managers can prepare for cash needs in advance and keep liquidity under control.
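A cash ladder of the kind described under Liquidity Forecasting can be sketched in a few lines. The function name `cash_ladder`, the sign convention (negative for capital calls, positive for distributions), and the sample amounts are illustrative assumptions.

```python
from collections import defaultdict
from datetime import date

def cash_ladder(opening_cash, flows):
    """Return [(date, net_flow, projected_balance)] in date order.

    flows: iterable of (date, amount); negative = capital call (outflow),
    positive = distribution (inflow).
    """
    net = defaultdict(float)
    for d, amount in flows:
        net[d] += amount
    ladder, balance = [], opening_cash
    for d in sorted(net):
        balance += net[d]
        ladder.append((d, net[d], balance))
    return ladder

# Hypothetical projected flows for one fund.
flows = [
    (date(2024, 4, 15), -3_000_000.0),  # capital call
    (date(2024, 4, 30), 1_200_000.0),   # distribution
    (date(2024, 5, 10), -2_500_000.0),  # capital call
]
ladder = cash_ladder(2_000_000.0, flows)

# Dates on which projected cash goes negative and funding must be arranged.
shortfalls = [d for d, _, balance in ladder if balance < 0]
```

With these inputs the projected balance dips below zero on 15 April and again on 10 May, which is the advance warning the liquid asset manager needs.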

Crucially, the IBOR cannot be a data island. It must function as a specialised component within your broader architecture.

For illiquid/private assets, where valuations may update on a delayed basis (e.g. quarterly) but underlying operational metrics arrive monthly, the architecture must support and orchestrate data from models stored in the data lake.

Emerging capabilities:

- Intelligent Ingestion: NLP and ML automatically extract key data from unstructured sources – deal documents, portfolio company financials, capital call notices – eliminating weeks of manual entry.

In addition, where required, more sophisticated architectures employ real-time data snaps into the lake, such as Aladdin’s ADC, or event-driven messaging, where every trade execution, position update, or cash movement publishes an event to a central message bus.

This allows the data lake, risk systems, compliance monitors, and data science platforms to consume IBOR data in near-real-time.
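The event-driven pattern can be shown with an in-memory stand-in for the message bus. A production system would use a durable broker; the `EventBus` class, the topic name `trade.executed`, the event fields, and the 1,000,000 notional threshold are all hypothetical.

```python
from collections import defaultdict

class EventBus:
    """Minimal in-process publish/subscribe bus (illustration only)."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, handler):
        self._subscribers[topic].append(handler)

    def publish(self, topic, event):
        for handler in self._subscribers[topic]:
            handler(event)

positions = defaultdict(float)   # consumer 1: position keeping
large_trades = []                # consumer 2: risk monitoring

def update_position(event):
    positions[(event["portfolio"], event["isin"])] += event["qty"]

def flag_large_trade(event):
    if abs(event["qty"] * event["px"]) > 1_000_000:
        large_trades.append(event["trade_id"])

bus = EventBus()
bus.subscribe("trade.executed", update_position)
bus.subscribe("trade.executed", flag_large_trade)

# The IBOR publishes once; every subscribed consumer reacts independently.
bus.publish("trade.executed",
            {"trade_id": "T1", "portfolio": "FUND1", "isin": "AAA", "qty": 1_000, "px": 50.0})
bus.publish("trade.executed",
            {"trade_id": "T2", "portfolio": "FUND1", "isin": "AAA", "qty": 30_000, "px": 50.0})
```

The publisher does not know which systems are listening, so a new consumer such as a compliance monitor can be added without changing the IBOR.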

Bridging IBOR and outsourced ABOR

The reconciliation engine

The seam between onsite IBOR and outsourced ABOR is your highest-risk area for data quality failures. The reconciliation engine must operate continuously, comparing positions, cash balances, and corporate actions between systems with configurable tolerance thresholds.

In a workflow-managed environment, ML models identify systematic breaks and automatically apply corrections for known patterns, escalating non-systematic discrepancies for human review.
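The core comparison with a configurable tolerance might look like the following sketch. It covers only the tolerance check and break classification; the ML-driven correction of systematic breaks is outside its scope. The `reconcile` function, the break labels, and the placeholder identifiers are assumptions.

```python
def reconcile(ibor, abor, tolerance):
    """Compare two {(portfolio, instrument): quantity} books.

    Returns a list of breaks as (key, ibor_qty, abor_qty, kind), where kind is
    "missing" (present on one side only) or "quantity" (difference > tolerance).
    """
    breaks = []
    for key in sorted(set(ibor) | set(abor)):
        i, a = ibor.get(key), abor.get(key)
        if i is None or a is None:
            breaks.append((key, i, a, "missing"))
        elif abs(i - a) > tolerance:
            breaks.append((key, i, a, "quantity"))
    return breaks

# Hypothetical books; "AAA", "BBB", "CCC" are placeholder instrument codes.
ibor_positions = {("FUND1", "AAA"): 1000.0, ("FUND1", "BBB"): 500.0, ("FUND1", "CCC"): 250.0}
abor_positions = {("FUND1", "AAA"): 1000.004, ("FUND1", "BBB"): 480.0}

breaks = reconcile(ibor_positions, abor_positions, tolerance=0.01)
```

Here the small rounding difference on AAA falls within tolerance, while BBB is reported as a quantity break and CCC as missing from the ABOR. In practice the tolerance would likely vary by asset class and by the field being compared.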

Multi-dimensional hierarchy

The critical architectural decision: determining the golden source for each data domain. IBOR typically owns pre-trade positions and intraday P&L. ABOR owns official NAV, audited financials, and regulatory reporting.

But for analytics spanning both domains – like multi-period attribution combining daily IBOR returns with monthly ABOR valuations – the Lakehouse must maintain a unified timeline of position snapshots, corporate actions, and valuation events with clear precedence rules by source.

The hierarchy models must consider source, data domain, and effective period to determine precedence. This complexity intensifies as IBOR feeds move to near-real-time updates.
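A precedence lookup keyed on source, data domain, and effective period could be sketched as below. The rule table is purely illustrative: it assumes ABOR valuations take precedence up to the last official NAV date (taken here as 31 March 2024) and that IBOR proxy valuations apply afterwards. `RULES` and `golden_value` are hypothetical names.

```python
from datetime import date

# (domain, source, effective_from, effective_to, rank); lower rank wins.
RULES = [
    ("valuation", "ABOR", date.min, date(2024, 3, 31), 1),
    ("valuation", "IBOR", date.min, date.max, 2),
    ("position", "IBOR", date.min, date.max, 1),
    ("position", "ABOR", date.min, date.max, 2),
]

def golden_value(domain, as_of, candidates):
    """Pick the value from the highest-precedence source effective on as_of.

    candidates: {source: value} for the sources that actually supplied data.
    """
    ranked = sorted(
        (rank, source)
        for d, source, start, end, rank in RULES
        if d == domain and start <= as_of <= end and source in candidates
    )
    if not ranked:
        raise LookupError(f"no precedence rule for {domain} on {as_of}")
    source = ranked[0][1]
    return source, candidates[source]

candidates = {"IBOR": 101.2, "ABOR": 100.0}
before_nav_cutoff = golden_value("valuation", date(2024, 3, 29), candidates)
after_nav_cutoff = golden_value("valuation", date(2024, 4, 10), candidates)
```

Keeping the rules as data rather than code means precedence can be reviewed and changed by the data owners without redeploying the pipeline.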

Enabling AI and data science

The Python / R-Centric analytics layer

Data science teams need fundamentally different infrastructure than traditional investment applications. They need freedom to experiment with new data sources, iterate rapidly on models, and deploy successful experiments to production – without extensive IT oversight.

The architecture should provide a managed Jupyter environment connected directly to the data lake, with pre-configured access to Python and R libraries for financial analysis, statistics, machine learning, and alternative data processing.

Feature stores: Early adopters recommend centralized repositories of pre-calculated metrics and transformations that ensure consistency between model training and production inference. When a quant develops a sentiment indicator from earnings transcripts, that same feature engineering pipeline must be available to trading solutions making real-time decisions.

Feature stores also solve temporal consistency: ensuring back-tests reflect only information available at historical points in time, avoiding look-ahead bias that plagues many AI implementations.
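The point-in-time idea reduces to an "as of" lookup: a back-test asking for a feature at time t must receive the latest value that was known at or before t. The sketch below uses only the standard library; `PointInTimeFeature` and the sample sentiment values are hypothetical, and a real feature store does considerably more.

```python
from bisect import bisect_right
from datetime import datetime

class PointInTimeFeature:
    """Stores (known_at, value) pairs and answers as-of queries."""

    def __init__(self):
        self._times, self._values = [], []

    def record(self, known_at, value):
        # Keep both lists sorted by the time the value became known.
        idx = bisect_right(self._times, known_at)
        self._times.insert(idx, known_at)
        self._values.insert(idx, value)

    def as_of(self, ts):
        """Latest value known at or before ts, or None if nothing was known yet."""
        idx = bisect_right(self._times, ts)
        return self._values[idx - 1] if idx else None

# Hypothetical sentiment scores, timestamped when each transcript was published.
sentiment = PointInTimeFeature()
sentiment.record(datetime(2024, 1, 25, 16, 30), 0.42)
sentiment.record(datetime(2024, 4, 25, 16, 30), -0.10)

# A back-test dated 1 April sees only the January value, never the April one.
seen_in_backtest = sentiment.as_of(datetime(2024, 4, 1))
```

Keying on the time a value became known, rather than the period it describes, is what prevents later information from leaking into earlier simulated decisions.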

The architecture should support model versioning, A/B testing frameworks, and automated monitoring that detects data drift or degraded performance. As AI commoditizes, alpha comes not from model sophistication but from proprietary data and the operational excellence to deploy models rapidly across the investment process.
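Automated drift monitoring can take many forms. One widely used measure, chosen here for illustration and not named in the text above, is the population stability index (PSI), which compares the distribution of a feature in production with its distribution at training time. The binning scheme and the 0.25 alert level are assumptions; 0.25 is a commonly cited rule of thumb rather than a standard.

```python
import math

def psi(expected, actual, bins=10):
    """Population stability index between a training and a production sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant samples

    def shares(xs):
        counts = [0] * bins
        for x in xs:
            i = int((x - lo) / width)
            counts[min(max(i, 0), bins - 1)] += 1  # clamp out-of-range values
        # Floor empty bins at a tiny share so the logarithm stays defined.
        return [max(c / len(xs), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

training = [i / 100 for i in range(100)]      # feature values at training time
production_same = list(training)              # unchanged distribution
production_shifted = [x + 0.5 for x in training]

drift_detected = psi(training, production_shifted) > 0.25
```

A monitoring job could compute this per feature on a schedule and raise an alert when the index crosses the chosen level.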

Alternative and private data integration

The alternative data market’s explosive growth creates both opportunity and operational chaos. Firms must ingest data from numerous vendors and specialist managers, each with unique schemas, delivery mechanisms, and quality characteristics.

The architecture requires a data catalogue with automated lineage tracking – allowing analysts to understand data provenance, transformations, refresh frequencies, and quality metrics before incorporating sources into investment processes.

Private assets create chaos: non-standardised valuations, breaks in reporting systems, gaps in ESG metrics, operational KPIs, and financial statements.

The architecture must accommodate bespoke data extraction from PDF reports, email attachments, and portfolio company management systems. NLP pipelines extract structured data from unstructured documents, but human-in-the-loop validation workflows ensure accuracy before data reaches portfolio managers or regulatory reports.

Data governance and quality: the unsexy foundation that makes everything work

Data governance isn’t glamorous. But it’s fundamental to architectural success. The market research numbers tell the story:

- “Investment professionals spend up to 40% of their time on data reconciliation rather than investment decisions”

- “Data management costs for multi-asset strategies run 18-23% higher than traditional portfolios”

- “70%+ of asset managers identify data quality as a primary concern.”

Future-proofing your architecture means embedding quality controls at every layer:

- Schema validation at ingestion: Catch errors before they propagate

- Automated anomaly detection: Statistical process control flags issues in real-time

- Data quality scorecards: Business users see quality metrics, not just IT
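The first two controls can be sketched together: a schema check at ingestion and a simple statistical process control test that flags values more than three standard deviations from recent history. `SCHEMA`, `validate`, `out_of_control`, and the sample prices are hypothetical.

```python
from statistics import mean, stdev

# Hypothetical expected shape of an incoming price record.
SCHEMA = {"isin": str, "price": float, "currency": str}

def validate(record):
    """Return a list of schema errors; an empty list means the record passes."""
    errors = []
    for field, expected_type in SCHEMA.items():
        if field not in record:
            errors.append(f"missing {field}")
        elif not isinstance(record[field], expected_type):
            errors.append(f"{field} is not {expected_type.__name__}")
    return errors

def out_of_control(history, value, sigmas=3.0):
    """Flag a value lying more than `sigmas` standard deviations from the mean."""
    mu, sd = mean(history), stdev(history)
    return sd > 0 and abs(value - mu) > sigmas * sd

schema_errors = validate({"isin": "US0378331005", "price": "12.3"})

recent_prices = [100.0, 100.5, 99.8, 100.2, 99.9, 100.1]
normal_tick = out_of_control(recent_prices, 100.3)
suspect_tick = out_of_control(recent_prices, 112.0)
```

The counts of failed records from checks like these are the natural inputs to the quality scorecards that business users see.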

For known problem areas – ESG data, alternative datasets – the architecture should support manual overrides with full audit trails. Investment teams can correct source data errors while maintaining regulatory compliance.

Note that not all data can be updated in the source system, hence the Medallion Architecture.
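A manual override with a full audit trail can be modelled as an append-only log applied on top of the untouched source data. The sketch assumes overrides live in a refined layer; `Override`, `OverrideLog`, the key layout, and the sample ESG score are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

@dataclass(frozen=True)
class Override:
    key: tuple
    value: float
    user: str
    reason: str
    at: datetime

class OverrideLog:
    """Append-only record of corrections; source data is never edited."""

    def __init__(self):
        self._entries = []

    def apply(self, key, value, user, reason):
        if not reason.strip():
            raise ValueError("an override must state a reason")
        self._entries.append(
            Override(key, value, user, reason, datetime.now(timezone.utc)))

    def resolve(self, source):
        """Return a corrected view; later overrides win over earlier ones."""
        view = dict(source)
        for entry in self._entries:
            view[entry.key] = entry.value
        return view

    def history(self, key):
        return [e for e in self._entries if e.key == key]

source = {("FUND1", "AAA", "esg_score"): 41.0}   # as delivered by the vendor
log = OverrideLog()
log.apply(("FUND1", "AAA", "esg_score"), 47.0, "jsmith", "Vendor restated score")
corrected = log.resolve(source)
```

Because the source dictionary is left unchanged, an auditor can always see both what the vendor delivered and who changed it, when, and why.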

Master data management becomes critical when bridging IBOR and outsourced ABOR.

The architecture requires a golden copy master data service that both systems reference, preventing identifier mismatches and entity duplication that plague multi-system environments.

In our experience, modern IBOR tooling provides a compelling use case for master data management between IBOR and ABOR. But the gaps created by multiple other data sources demand oversight and rigor.

Infrastructure and security: performance without compromise

Modern architectures must handle AI workloads while managing costs. Cloud-native designs with decoupled storage and elastic compute allow firms to scale GPU resources for intensive computations while maintaining lower costs for operational workloads. For latency-sensitive applications – real-time risk calculations during trading, for example – hybrid architectures with edge computing can deliver sub-millisecond response times.

Security in the age of AI: The architecture must give data scientists broad data access while preventing unauthorized disclosure of material non-public information or proprietary strategies.

The foundation: role-based access controls, data masking for sensitive fields, comprehensive audit logging.
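These three controls can be combined in a single read path: the role determines which fields are visible, everything else is masked, and every read is logged. The role names, field names, and mask string below are hypothetical.

```python
from datetime import datetime, timezone

# Hypothetical mapping of roles to the fields they may see in clear text.
ROLE_VISIBLE_FIELDS = {
    "data_scientist": {"isin", "sector", "signal"},
    "compliance": {"isin", "sector", "signal", "client_name", "mnpi_flag"},
}
audit_log = []

def read(record, user, role):
    """Return the record with fields outside the role's entitlement masked."""
    allowed = ROLE_VISIBLE_FIELDS.get(role, set())  # unknown roles see nothing
    audit_log.append({
        "user": user,
        "role": role,
        "fields": sorted(allowed & set(record)),
        "at": datetime.now(timezone.utc),
    })
    return {k: (v if k in allowed else "***") for k, v in record.items()}

record = {"isin": "US0378331005", "sector": "Technology", "signal": 0.7,
          "client_name": "Example Pension Fund", "mnpi_flag": True}

scientist_view = read(record, "alice", "data_scientist")
compliance_view = read(record, "bob", "compliance")
```

Masking at read time lets data scientists work with the full dataset's shape while sensitive values stay hidden from them.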

For AI models accessing insider information or pre-publication research, the architecture must enforce ethical walls and guardrails that prevent information leakage to other investment teams.

The strategic payoff: competitive advantage in three dimensions

This modern data architecture delivers competitive advantages that justify the transformation cost and complexity:

1. Real-Time Risk Management

Calculate VaR and monitor complex regulatory compliance (UCITS, Solvency II) in real-time across all assets – including illiquid positions. No blind spots. No delays.

2. Alpha Acceleration

The Lakehouse and Data Mesh eliminate the IT/Quant agility gap. Models developed in Python/R move seamlessly from sandbox to production. Firms exploit transient market opportunities using advanced models.

3. Total Portfolio Oversight

Portfolio managers gain a unified, trusted, near-real-time view across all public and private assets. Investment and risk decisions use the same accurate data. Reconciliation risk plummets, and returns stand to improve.

The bottom line…

By adopting a cloud-native, domain-driven architecture, asset managers are transforming from technology consumers using packaged IBOR solutions into agile, data-driven technology ecosystems.

Their strategies can finally keep pace with the accelerating demands of modern finance.

The question is not whether to transform. It is whether you can afford not to.

Andrew van Osch

SENIOR MANAGER